Software Testing Life Cycle: How We Do Software Testing at Azoft

Releasing a project without a decent testing phase is a risk that is hard to quantify. A single critical bug can cost you your audience, time, and money. It may not be obvious from the client’s point of view, but quality assurance is not something to neglect. That’s why we have our own in-house QA department.

We looked back on three big projects we’ve done to show you what quality assurance reps really do here at Azoft.

The first project is an Android app for a logistics and transportation company called CDEK. The second one is an automation system for an amusement park called Lyubogorod. Last but not least is the website builder for Elektronny Gorod, a local Russian telco.

Software Testing Life Cycle

The QA department starts working when a client provides requirements for the entire project or the feature we are building. Before testing the software itself, our quality assurance reps analyze documentation, specify requirements and participate in project meetings.

We usually pair functional testing with non-functional testing. We ensure that each and every project meets the requirements specified in the project documentation. We also make sure everything is OK with layout and usability.

Both functional and non-functional testing start right after a feature has passed the coding phase. Let’s take a closer look at each step.

Specifying requirements and writing test documentation

As a rule, we test every project and feature built by our team. Regardless of the project size and complexity, there are always requirements defined by a client and sometimes by our business analysts.

The Azoft QA department first scrutinizes the requirements and refines them if necessary. The task here is to eliminate contradictions and make the requirements straightforward and comprehensive.

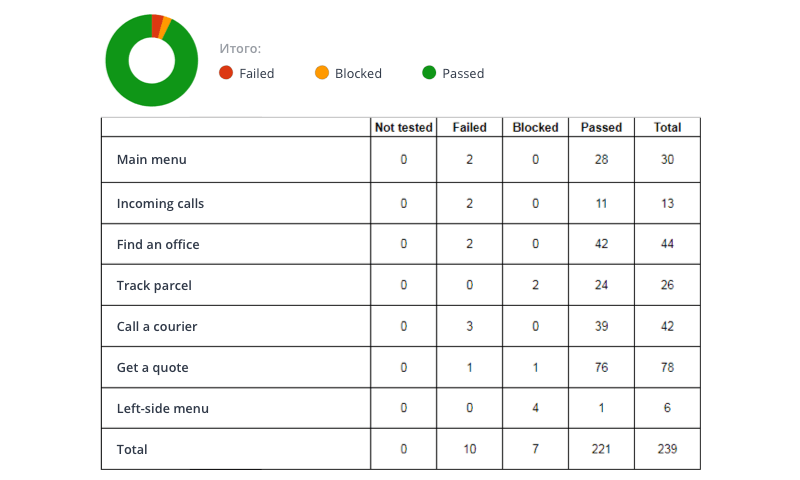

To write test documentation, we define a test strategy and select a toolset. For CDEK and Lyubogorod we used checklists, one of the test documentation types we use in our projects. Usually, the project’s features and the client’s wishes determine what the test documentation contains and how it looks.

Once the requirements are specified, we start writing test documentation. Sometimes we do both simultaneously. For example, when building apps for CDEK and Lyubogorod, we wrote test docs and specified requirements on the go.

Software testing

We do not stick to one testing type; instead, we combine various approaches and tactics when working on a project.

Functional testing

All three above-mentioned projects were tested using checklists. A simple document helped us track how systems responded to different input data in a number of scenarios.
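As an illustration only, a checklist of this kind can be expressed as a small table-driven check. The sketch below is not taken from any of the projects: the validation rule, the scenario names and the helper `run_checklist` are all hypothetical and stand in for whatever feature is being tested.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class ChecklistItem:
    scenario: str      # what the tester is checking
    input_data: str    # data fed into the system
    expected: bool     # response the requirements call for


def is_valid_tracking_number(value: str) -> bool:
    """Hypothetical rule under test: a tracking number is exactly 10 digits."""
    return len(value) == 10 and value.isdigit()


# Hypothetical checklist: one row per scenario and input.
CHECKLIST = [
    ChecklistItem("valid number", "1234567890", True),
    ChecklistItem("too short", "12345", False),
    ChecklistItem("contains letters", "12345abcde", False),
    ChecklistItem("empty input", "", False),
]


def run_checklist(
    check: Callable[[str], bool], items: List[ChecklistItem]
) -> List[Tuple[str, str]]:
    """Run every checklist row and record PASS or FAIL per scenario."""
    results = []
    for item in items:
        actual = check(item.input_data)
        status = "PASS" if actual == item.expected else "FAIL"
        results.append((item.scenario, status))
    return results


if __name__ == "__main__":
    for scenario, status in run_checklist(is_valid_tracking_number, CHECKLIST):
        print(f"{status}: {scenario}")
```

Keeping the scenarios as data rather than as separate test functions mirrors how a checklist document is read: one line per scenario, with the outcome recorded next to it.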

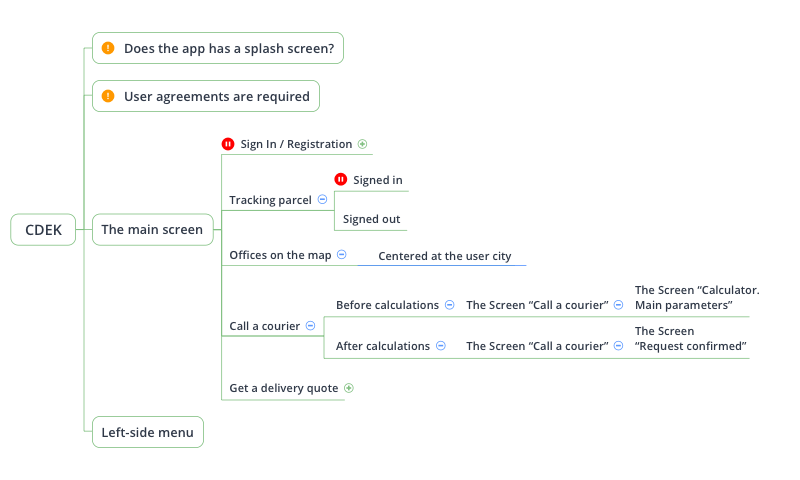

We applied use case testing when working on the project for CDEK. A use case is a description of a particular use of the system by an actor or user. To make the use cases easier to follow, we built mind maps in XMind. This enabled us to visualize the system behavior and see the logic behind each use case.

During the testing phase we usually need to access the project database and make changes. The database client software we use depends on the database type. For example, we used HeidiSQL to manage a MySQL database while working on the project for Lyubogorod.

Non-functional testing

We test the layout on multiple operating systems, OS versions and mobile screen resolutions. Web apps are tested in different browsers and on different operating systems as well.

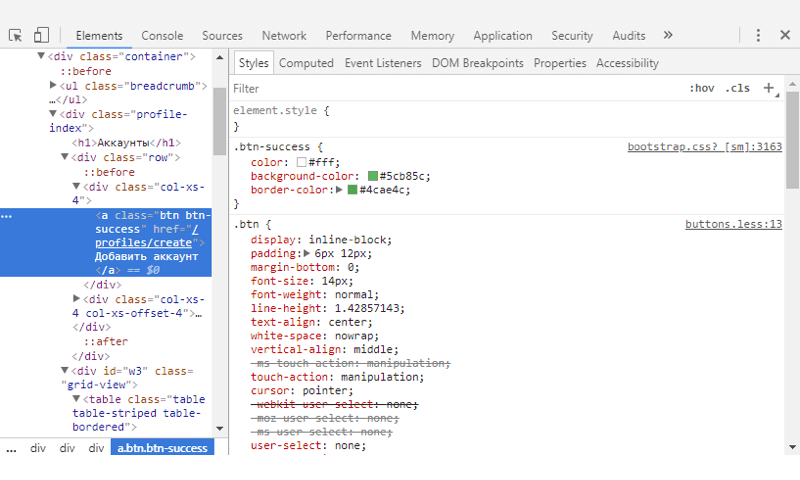

Chrome Developer Console and the Firefox Firebug Console are simple yet powerful tools that enable software testers to assure layout quality.

We always make sure a project is developed in accordance with its wireframes and design specifications. Aside from this, we double-check that apps meet the requirements specified in the Apple and Google style guides. The experience of a QA rep is crucial here: they can help avoid UI pitfalls and improve the app’s UX.

We performed interoperability testing for CDEK and Lyubogorod. The first task was to find out how the system components intercommunicate. The other task was to make sure the entire system can integrate with external systems seamlessly.

The application programming interface (API) is the part of a mobile app we like to test at the very beginning of the development phase. Postman helped us perform API testing for CDEK and Lyubogorod.
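The kind of check an API test performs can be sketched in a few lines of Python. This is a rough equivalent of sending a request and asserting on the response, not the actual collection used on these projects. The `/orders/42` endpoint, its fields and the allowed status values are hypothetical, and a local stub server stands in for the real backend.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

ALLOWED_STATUSES = {"created", "in_transit", "delivered"}  # hypothetical values


def validate_order_response(status: int, content_type: str, payload: dict) -> list:
    """Return a list of problems found in the response; empty means it passed."""
    problems = []
    if status != 200:
        problems.append(f"unexpected HTTP status {status}")
    if content_type != "application/json":
        problems.append(f"unexpected content type {content_type!r}")
    if not isinstance(payload.get("id"), int):
        problems.append("field 'id' is missing or not an integer")
    if payload.get("status") not in ALLOWED_STATUSES:
        problems.append("field 'status' is missing or has an unknown value")
    return problems


class StubHandler(BaseHTTPRequestHandler):
    """Local stand-in for the backend, serving one hypothetical endpoint."""

    def do_GET(self):
        if self.path == "/orders/42":
            body = json.dumps({"id": 42, "status": "delivered"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
        else:
            body = b""
            self.send_response(404)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # keep the demo output quiet


def run_demo() -> list:
    """Start the stub server, call the endpoint once and validate the reply."""
    server = HTTPServer(("127.0.0.1", 0), StubHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        host, port = server.server_address
        # An empty ProxyHandler makes sure the loopback call bypasses any proxy.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(f"http://{host}:{port}/orders/42", timeout=5) as resp:
            payload = json.loads(resp.read())
            return validate_order_response(
                resp.status, resp.headers["Content-Type"], payload
            )
    finally:
        server.shutdown()
        server.server_close()
```

Calling `run_demo()` returns the list of problems found for the stubbed endpoint. Because the validation is a separate function, the same checks can be pointed at a real response once the backend is available.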

All three projects required us to make sure the client-server interaction is up and running. Thus we needed a tool to capture and review traffic. Fiddler helped us to make sure the data is displayed correctly on the client side.

Additional testing types

Every project defines what kind of testing it will require. For example, load testing is a must when a system is most likely to work under heavy traffic. In such cases, the client usually provides the requirements specs and we measure a system’s behavior under both normal and anticipated peak load conditions.

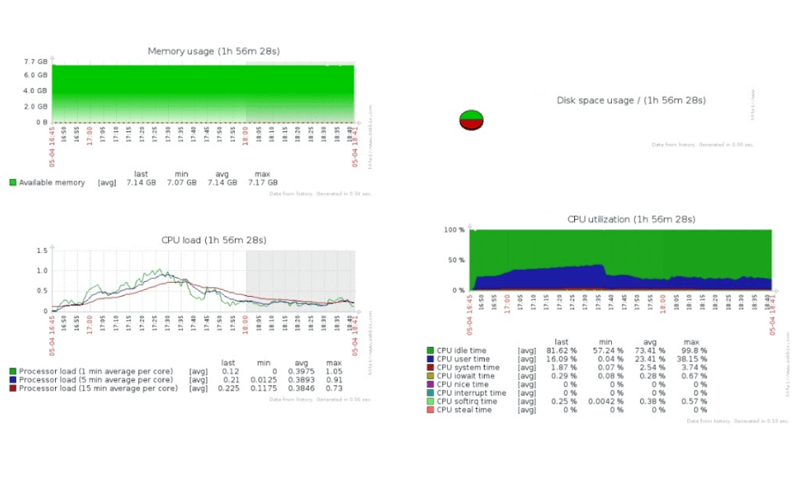

Load testing was part of the testing services we provided for Elektronny Gorod. First, we defined the use cases to be tested. The key one turned out to be the main page being visited by a large number of concurrent users.

We used JMeter (a performance testing tool by Apache) to test this use case under load. After setting up the testing environment, we prepared scenarios and ran multiple tests on different remote machines in parallel.

Then we used Zabbix for system monitoring and tracked the system’s behavior together with the developers. We identified errors, optimized the system and reran the tests until the system met the load testing requirements. The test results were compiled into a report and shared with the client.
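The measure-and-compare loop described above can be sketched in plain Python. This is not a JMeter test plan, just a minimal illustration under stated assumptions: `fake_main_page_request` simulates a request with a short sleep, and the user counts and the 95th-percentile threshold are made-up numbers, not the client's requirements.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def fake_main_page_request() -> float:
    """Stand-in for an HTTP request to the main page; returns latency in seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # simulated server work
    return time.perf_counter() - start


def run_load(request_fn, concurrent_users: int, requests_per_user: int) -> list:
    """Run one session per simulated user in parallel and collect all latencies."""

    def user_session(_user_id: int) -> list:
        return [request_fn() for _ in range(requests_per_user)]

    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        sessions = list(pool.map(user_session, range(concurrent_users)))
    return [latency for session in sessions for latency in session]


def summarize(latencies: list) -> dict:
    """Compute count, mean, nearest-rank 95th percentile and maximum."""
    ordered = sorted(latencies)
    # Nearest-rank percentile: rank = ceil(0.95 * n), done in integer arithmetic.
    p95_rank = (95 * len(ordered) + 99) // 100
    return {
        "count": len(ordered),
        "mean": statistics.mean(ordered),
        "p95": ordered[p95_rank - 1],
        "max": ordered[-1],
    }


def meets_requirement(summary: dict, max_p95_seconds: float) -> bool:
    """Compare the measured 95th percentile against the agreed threshold."""
    return summary["p95"] <= max_p95_seconds


if __name__ == "__main__":
    results = summarize(run_load(fake_main_page_request, 20, 5))
    print(results)
    print("requirement met:", meets_requirement(results, max_p95_seconds=0.5))
```

In a real run the request function would call the system under test, and the pass or fail result would decide whether another round of optimization and retesting is needed.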

The functional and non-functional testing phase resulted in tasks created in a bug tracker. We also provided detailed descriptions and suggested some improvements.

Bug fix verification and acceptance testing

This is the phase in which we make sure all bugs in the software have been fixed and no new ones have come up. Then we check the functionality to ensure it hasn’t changed.

Before delivering a project, we check the system’s availability by performing either acceptance testing or smoke testing.

Acceptance testing enables you to evaluate the system’s compliance with the business requirements and assess whether it is acceptable for delivery. Smoke testing just ensures that the most important system functions work.

Summing Up

You’ve learned about the software testing life cycle at Azoft. The steps are usually the same, but the testing types can differ greatly from project to project. Apart from interoperability testing, load testing, performance testing, acceptance testing and smoke testing, our services include security testing, exploratory testing, test automation and whatever else your project needs.

By outsourcing software development to Azoft, you make sure your project will be tested in-house. There is no need to find other contractors solely for software testing. Azoft quality assurance reps work together with our development team, which makes testing faster and more effective.