Oriflame: Augmented Reality Dancing App Development

Starting Up

In spring 2017 we started working for Oriflame as a subcontractor. Oriflame is a leading beauty company that sells directly to the public. Imagine Apple selling cosmetics: that's how big they are. The development phase started in April. Our client was in charge of everything related to business logic and design, while we at Azoft focused solely on mobile application development.

The initial idea was to develop an Augmented Reality application. After we scrutinized the project specifications, we realized that a full AR app would be overkill. We shared our thoughts with the client and described the possible alternatives. After evaluating them together, we decided to keep it simple and use video filters, which could do everything the project required.

About the app

When first launched, the app offers a quick onboarding: a simple yet crucial training process that helps users learn the Oriflame dance from a famous dancer. The video lesson is split into 18 short segments to make the training more convenient and effective. Users then choose one of two celebrities to dance with. They dance alongside their idol and record themselves on video at the same time. The last and most important step is social sharing, which is how users introduce their dancing videos to the community.

On June 17, 2017, our client launched a major promo campaign: a dancing flash mob. The app we built was the marketing tool that helped Oriflame engage their audience and gather thousands of people in one place. Those attending the flash mob were expected to download the app in advance, so the release date could not move. We met the project schedule and delivered everything as planned. However, there were a couple of bugbears worth mentioning.

Working on iOS app

It took us 3 months to build the iOS app. Despite the unexpected changes, we met the timeline. The app was written in Swift, which we find more modern and convenient than Objective-C. The result was a simple and user-friendly mobile application that was surprisingly complex to build.

From the very beginning, we had a gut feeling that something would go wrong with video playback. And that's exactly what happened. We faced issues with compression artifacts, video overlay, and audio and video falling out of sync.

When choosing between native and cross-platform development, we knew from past experience that a hybrid application wouldn't fit well. At first glance, there was nothing complex in this project. In reality, a cross-platform app couldn't handle the tasks we had. We expected the kind of bugs that are costly to fix and that never appear when you build a native app. That's why we built native apps for both iOS and Android.

Finding the right framework for video processing became the cornerstone of the entire mobile development process. We were looking for a tool that could help us manage the video overlay. The only one that worked for us was the open-source GPUImage; the other frameworks we evaluated didn't meet our expectations. Because GPUImage is written for iOS, it didn't require any additional changes or updates. The only thing that let us down was its insufficient documentation.

Working on Android app

Working on the Android application was much more challenging, but we finished it faster: two months after we wrote the first line of code. During the development phase, we tinkered with GPUImage a lot. The library does not support Android, but we managed to use it there anyway. This was not a decision made on a whim. We analyzed the possible scenarios beforehand, and the effort estimation indicated that rewriting GPUImage for Android was the best option.

The core task was to lay the videos of dancing celebrities over the users' footage. To manage the chroma key overlay, we wrote a special video filter. Multiple tests showed that the overlay reduced performance, so to speed things up we applied hardware acceleration to every overlay process.
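To give a sense of what a chroma key filter does, here is a minimal CPU-side sketch in Python. It is not the filter from the project, which ran with hardware acceleration on the device. The function names, the pure-green key color, and the `threshold` and `smoothing` values are all assumptions chosen for illustration. The idea is to measure how far each overlay pixel's chroma is from the key color and to use that distance as the pixel's opacity.

```python
def rgb_to_cbcr(r, g, b):
    """Return the chroma pair (Cb, Cr) for an RGB color with channels in 0..1."""
    y = 0.299 * r + 0.587 * g + 0.114 * b  # BT.601 luma weights
    return 0.564 * (b - y), 0.713 * (r - y)


def smoothstep(edge0, edge1, x):
    """Clamp x into [edge0, edge1] and ease it to the 0..1 range."""
    t = min(max((x - edge0) / (edge1 - edge0), 0.0), 1.0)
    return t * t * (3.0 - 2.0 * t)


def overlay_alpha(pixel, key, threshold=0.3, smoothing=0.1):
    """Opacity of an overlay pixel: 0 near the key color, 1 far away from it.

    threshold and smoothing are illustrative values, not tuned ones.
    """
    cb, cr = rgb_to_cbcr(*pixel)
    key_cb, key_cr = rgb_to_cbcr(*key)
    distance = ((cb - key_cb) ** 2 + (cr - key_cr) ** 2) ** 0.5
    return smoothstep(threshold, threshold + smoothing, distance)


def composite_pixel(overlay, camera, key=(0.0, 1.0, 0.0)):
    """Blend one overlay (celebrity) pixel over one camera (user) pixel."""
    a = overlay_alpha(overlay, key)
    return tuple(a * o + (1.0 - a) * c for o, c in zip(overlay, camera))


def composite_frame(overlay_frame, camera_frame, key=(0.0, 1.0, 0.0)):
    """Blend two equally sized frames given as rows of RGB tuples."""
    return [
        [composite_pixel(o, c, key) for o, c in zip(o_row, c_row)]
        for o_row, c_row in zip(overlay_frame, camera_frame)
    ]
```

Comparing chroma rather than raw RGB makes the key less sensitive to uneven lighting on the green screen. Because every pixel is processed independently, the same logic maps naturally onto a GPU fragment shader, which is where hardware acceleration pays off.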

The Android app had the same issues with video playback as the iOS one. Luckily for us, our colleagues from the iOS department were a huge help and resolved things very quickly. Looking back, the development stage wasn't easy at all, but it was really useful for future projects. For example, we hadn't worked much with hardware acceleration before. After this project, we are convinced that it is more than just helpful when it comes to managing heavy processes.

Our client was happy to see the work delivered on time. The promotional campaign was launched on June 17, as planned.

The final product

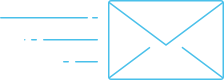

The app we built is simple and user-friendly. It encourages users to shoot a video and share it on social networks. Below are the application screens where users take key actions.

The training for users to learn and practice

Selecting a dancing partner

Shooting a video

Adding effects

Social sharing

Summing Up

This project was an example of teamwork as it should be. The client provided the app design, and we helped with wireframing. Both sides put in a great deal of effort to create an exceptional UX, and both the client and our team suggested changes to improve the user experience.

The process wasn't entirely smooth. But we polished our OpenGL skills, mastered hardware acceleration, and learned how to rewrite iOS libraries for Android development.

All the hassle around the app was justified by the business outcome. The number of downloads was expected to be between 2,000 and 3,000 across both platforms by the time the flash mob started. Instead, there were 5,000 installs from Google Play alone, and total installs of the Android and iOS versions exceeded 19,000. The audience reach on social networks was also impressive: posts made with the app passed the 5,000 mark, and the top 3 Instagram posts had 300,000 views in total. The Google Play rating is 4.6, which suggests the audience loved the app.
