
Proko: AI-Driven EdTech Solutions for an Online Art School

Businesses use AI to solve problems across many domains. Neural networks recommend products in online stores, help diagnose serious illnesses, manage financial portfolios, communicate with customers and process their requests, recognize speech, and help plan routes that avoid traffic jams.

AI has become so ubiquitous that it is even shaking up the art world, a domain long considered to belong to humans alone. For instance, Nvidia has demonstrated prototype AI software that turns rough doodles into realistic landscapes, which shows how AI has the potential to augment art.

Azoft has also been active in this field. We have completed several projects at the intersection of art, learning, and artificial intelligence. Read on to learn about our EdTech solutions, the challenges we faced, and the outcome.

In 2018, California-based artist Stan Prokopenko turned to Azoft. A teacher and YouTuber, he founded Proko, a popular educational resource that offers art instruction videos.

As the number of students grew significantly, checking their drawings began to take Stan a long time. As an artist, teacher, and entrepreneur who keeps an eye on tech trends, Stan wondered how to combine art, learning, and artificial intelligence to automate this routine work, and he asked Azoft to help.

The first project: portrait rating

Stan was curious about using neural networks to automate creative tasks. He decided to test whether artificial intelligence could distinguish the skill level of portrait drawings and rate them accordingly. For this task, the client collected and rated a dataset of about 1,000 drawings. Our R&D engineers used this dataset to train a neural network that rates drawings of people on a 5-point scale, from rough sketches to fully completed pictures.
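The case study does not describe the rating network or its input features. The sketch below is therefore an illustration only, not the model Azoft built. It is a dependency-free stand-in that rates a drawing with k-nearest neighbors, a method listed in the project stack, although how it was used there is not stated. The two-number feature vectors and the `knn_rate` helper are hypothetical. In a real system the features would come from a trained network or from image descriptors.

```python
import math
from collections import Counter


def knn_rate(train, query, k=3):
    """Predict a 1-5 skill rating for `query` from the k closest rated examples.

    train: list of (feature_vector, rating) pairs. The feature vectors are
    hypothetical numeric descriptors of a drawing (an assumption)."""
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(rating for _, rating in nearest)
    top = max(votes.values())
    # majority vote; ties go to the rating whose example is closest to the query
    for _, rating in nearest:
        if votes[rating] == top:
            return rating


# toy data: 1 = rough sketch ... 5 = fully completed picture
train = [((0.10, 0.20), 1), ((0.15, 0.25), 1),
         ((0.50, 0.50), 3), ((0.55, 0.45), 3),
         ((0.90, 0.95), 5), ((0.85, 0.90), 5)]
print(knn_rate(train, (0.12, 0.22)))
```

Because kNN just stores the labelled examples, adding newly rated drawings requires no retraining. That convenience is part of why it makes a readable stand-in here.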

The second project: perspective correction

Stan was glad that artificial intelligence could be used to help teachers. But, he wondered, could it be useful to students, too?

Perspective is a common stumbling block for novice artists. The client asked Azoft to create a prototype that finds vanishing points and checks whether an artist drew perspective correctly: in a correct drawing, the receding edges of objects converge at these points. To check for this, we applied a heuristic mathematical algorithm. It detects all drawn lines and converts them into an abstract coordinate system where each line becomes a point. Next, the algorithm analyzes groups of such points that lie along a straight line (linear clusters). It then draws lines through the linear clusters, and these lines help identify the vanishing points. As a result, we produced a solution that quickly checks drawings of streets.
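The case study does not name the transform behind the "each line becomes a point" step. One transform with exactly the described property is projective point-line duality, and the sketch below assumes it. A drawn line a*x + b*y + c = 0 maps to the point (a/c, b/c). All lines through a common point V = (vx, vy) then map to points on the straight line u*vx + w*vy + 1 = 0, which forms a linear cluster. The sketch finds the largest cluster by trying every pair of lines as a hypothesis, a deterministic, RANSAC-style search. It then fits the cluster by least squares. The function names and the 2-pixel tolerance are hypothetical. Line detection itself is out of scope, so segments are given as endpoint pairs.

```python
import math
from itertools import combinations


def to_line(segment):
    """Homogeneous line (a, b, c), a*x + b*y + c = 0, through a segment's endpoints."""
    (x1, y1), (x2, y2) = segment
    # cross product of the homogeneous points (x1, y1, 1) and (x2, y2, 1)
    return (y1 - y2, x2 - x1, x1 * y2 - x2 * y1)


def to_dual_point(line):
    """Map line a*x + b*y + c = 0 (with c != 0) to the point (a/c, b/c).

    Lines through a common point V = (vx, vy) map to points (u, w) on the
    straight line u*vx + w*vy + 1 = 0, i.e. to a linear cluster."""
    a, b, c = line
    return (a / c, b / c)


def fit_cluster(duals):
    """Least-squares line u*vx + w*vy = -1 through dual points; returns V or None."""
    suu = sum(u * u for u, _ in duals)
    sww = sum(w * w for _, w in duals)
    suw = sum(u * w for u, w in duals)
    su = sum(u for u, _ in duals)
    sw = sum(w for _, w in duals)
    det = suu * sww - suw * suw
    if abs(det) < 1e-12:
        return None  # the lines are (nearly) parallel: no finite vanishing point
    # solve the 2x2 normal equations by Cramer's rule
    vx = (-su * sww + sw * suw) / det
    vy = (-sw * suu + su * suw) / det
    return (vx, vy)


def distance_to_line(point, line):
    a, b, c = line
    return abs(a * point[0] + b * point[1] + c) / math.hypot(a, b)


def find_vanishing_point(segments, tol=2.0):
    """Return (vanishing_point, indices of segments in the largest linear cluster)."""
    lines = {i: to_line(s) for i, s in enumerate(segments)}
    lines = {i: l for i, l in lines.items() if abs(l[2]) > 1e-9}  # skip lines through the origin
    best = []
    for i, j in combinations(lines, 2):
        # two dual points define a candidate cluster line, i.e. a candidate V
        candidate = fit_cluster([to_dual_point(lines[i]), to_dual_point(lines[j])])
        if candidate is None:
            continue
        inliers = [k for k in lines if distance_to_line(candidate, lines[k]) <= tol]
        if len(inliers) > len(best):
            best = inliers
    if len(best) < 2:
        return None, []
    return fit_cluster([to_dual_point(lines[k]) for k in best]), best


segments = [((0, 1), (4, 5)), ((0, 3), (5, 3)), ((2, 0), (2, 6)), ((10, 0), (10, 1))]
print(find_vanishing_point(segments))  # the last segment is the outlier
```

The sketch skips segments whose line passes exactly through the coordinate origin, because the dual map needs c != 0. It also minimizes algebraic rather than geometric error in the final fit. Both are simplifications a production version would revisit.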

The third project: parallelepiped drawing correction

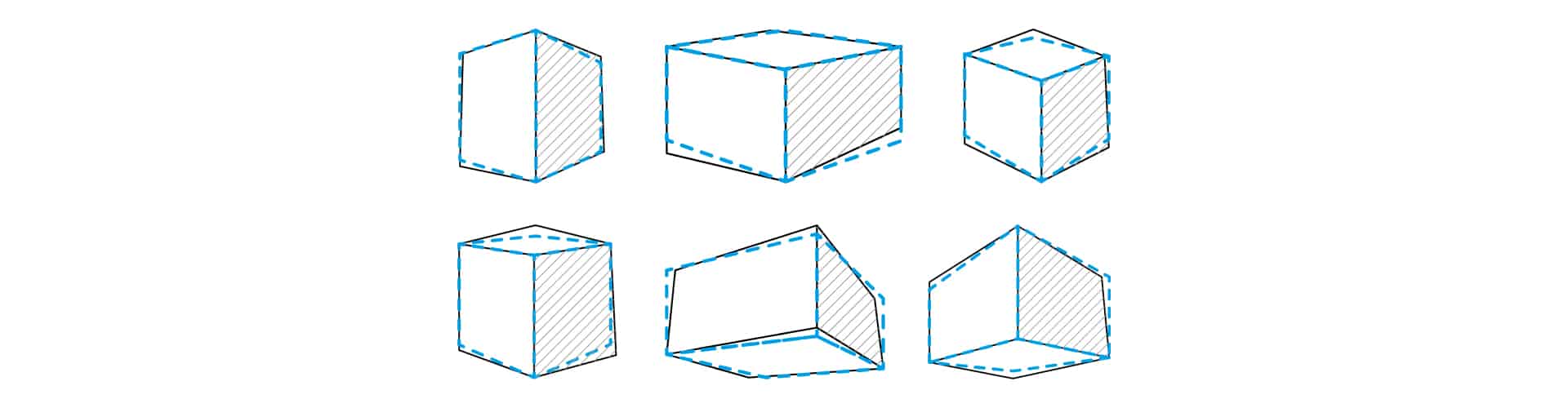

Stan liked the results of the first two projects. After careful consideration, he came up with the idea of an e-learning tool for novice artists that would closely align with his business goals. Beginners usually practice perspective on simple objects such as parallelepipeds. So our task was to create an app that automatically corrects errors in parallelepiped drawings made in one-, two-, and three-point perspective.

To achieve this goal, we had to address a few challenges.

- Insufficient input data for the heuristic mathematical algorithm.

We had already solved the task of checking perspective in the second project. There, however, the heuristic mathematical algorithm had enough input data, because drawings of streets contain many lines. In the new project, we had to deal with parallelepiped drawings that contain only a few lines (a parallelepiped has just 12 edges). This is why we reconsidered our approach and opted for neural networks. To speed up dataset collection, we created a tool that generates drawings: it draws parallelepipeds in all three types of perspective on different backgrounds.
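The internals of the drawing generator are not described. The sketch below illustrates the idea under stated assumptions: a pinhole camera, the y axis treated as vertical, and hypothetical names such as `render_box`. It rotates a box, projects its 12 edges to 2D, and derives the perspective label from the geometry. A box axis yields a finite vanishing point only when its direction has a depth (z) component in camera coordinates. A box facing the camera therefore gives one-point perspective. Turning it about the vertical axis gives two-point perspective, and tilting the camera as well gives three-point perspective. Backgrounds, stroke style, and rasterizing to pixels are left out.

```python
import math
from itertools import product


def rotation(yaw, pitch):
    """Camera-from-object rotation R = Rx(pitch) @ Ry(yaw) as nested lists."""
    cy, sy = math.cos(yaw), math.sin(yaw)
    cp, sp = math.cos(pitch), math.sin(pitch)
    ry = [[cy, 0.0, sy], [0.0, 1.0, 0.0], [-sy, 0.0, cy]]
    rx = [[1.0, 0.0, 0.0], [0.0, cp, -sp], [0.0, sp, cp]]
    return [[sum(rx[i][k] * ry[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def render_box(size=(1.0, 1.0, 1.0), yaw=0.0, pitch=0.0, distance=6.0, focal=500.0, tol=1e-6):
    """Project a box with a pinhole camera; return its 12 edges and perspective label."""
    r = rotation(yaw, pitch)
    w, h, d = size
    corners = {}
    for ix, iy, iz in product((0, 1), repeat=3):
        p = ((ix - 0.5) * w, (iy - 0.5) * h, (iz - 0.5) * d)  # box centred at the origin
        x, y, z = (sum(r[i][k] * p[k] for k in range(3)) for i in range(3))
        z += distance  # place the box in front of the camera
        corners[(ix, iy, iz)] = (focal * x / z, focal * y / z)
    # an edge joins two corners whose indices differ in exactly one position
    edges = [(corners[a], corners[b]) for a in corners for b in corners
             if a < b and sum(ai != bi for ai, bi in zip(a, b)) == 1]
    # box axis j has a finite vanishing point iff its camera-space depth component is non-zero
    label = sum(1 for j in range(3) if abs(r[2][j]) > tol)
    return edges, label


for yaw, pitch in [(0.0, 0.0), (0.5, 0.0), (0.5, 0.3)]:
    edges, label = render_box(yaw=yaw, pitch=pitch)
    print(f"yaw={yaw}, pitch={pitch}: {len(edges)} edges, {label}-point perspective")
```

Sampling `size`, `yaw`, and `pitch` at random would yield an unlimited stream of labelled line drawings. This is the property that makes a generator attractive when real, labelled student drawings are scarce.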

- Imperfect conditions.

Imagine that two art school students, George and Anna, were asked to draw a parallelepiped and submit their drawings to their teacher. George completed the task diligently. He drew carefully in pencil on white paper, photographed the result in daylight with the camera held parallel to the table surface, and cropped the photo so that the parallelepiped was in the center. Anna, on the contrary, left the task until the last minute and had only a pen and a lined notebook at hand. Before sending the drawing, she checked her work and corrected a couple of lines by highlighting them and leaving a note.

George and Anna did the same task under very different conditions. We therefore had to anticipate such conditions to ensure that the algorithm would recognize both drawings successfully.
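A common way to prepare a model for conditions like George's and Anna's is to randomize them in the training data, a technique often called domain randomization. The case study does not say whether Azoft did this. The sketch below is a hypothetical illustration: the `CaptureConditions` fields and their value ranges are illustrative guesses, not the project's parameters. A renderer would then apply each sampled setting to a generated drawing.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class CaptureConditions:
    """Hypothetical knobs describing how a drawing was made and photographed."""
    tool: str               # "pencil" (George) or "pen" (Anna)
    paper: str              # "blank", "lined" or "grid"
    brightness: float       # 0.4 (dim room) .. 1.2 (bright daylight), illustrative range
    camera_tilt_deg: float  # 0 means the camera is parallel to the table
    crop_offset: tuple      # (dx, dy) shift of the object from the frame centre, as fractions
    has_annotations: bool   # highlighted corrections or handwritten notes


def sample_conditions(rng):
    """Draw one random set of capture conditions (all ranges are assumptions)."""
    return CaptureConditions(
        tool=rng.choice(["pencil", "pen"]),
        paper=rng.choice(["blank", "lined", "grid"]),
        brightness=rng.uniform(0.4, 1.2),
        camera_tilt_deg=rng.uniform(0.0, 30.0),
        crop_offset=(rng.uniform(-0.25, 0.25), rng.uniform(-0.25, 0.25)),
        has_annotations=rng.random() < 0.2,
    )


rng = random.Random(42)  # seeded so a generated dataset can be reproduced
for conditions in (sample_conditions(rng) for _ in range(3)):
    print(conditions)
```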

Using neural networks, we created an algorithm that works as follows:

- Using a friend-or-foe approach (a yes/no check), it first determines whether the picture contains a parallelepiped.

- It ignores any objects in the picture other than the parallelepiped.

- It then locates the parallelepiped, selects several reference edges, and analyzes them to identify the type of perspective.

- Using the reference edges and the type of perspective, the algorithm draws the edges in their correct positions. If these match the edges drawn by the student, the drawing is correct. If not, there is a mistake, and the algorithm shows the corrections.
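The final step can be pictured geometrically. The sketch below assumes that a finite vanishing point for an edge family has already been estimated from the reference edges. It uses hypothetical names and a 3-degree tolerance chosen only for illustration. A student edge is accepted if its line points at the vanishing point. Otherwise the edge keeps its start point, and its end point is projected onto the line running toward the vanishing point. Edges whose vanishing point lies at infinity, such as edges parallel to the picture plane in one-point perspective, would need a direction comparison instead and are not handled here. The sketch also assumes that no edge starts exactly at the vanishing point.

```python
import math


def line_angle_deg(p, q, r):
    """Angle between lines p-q and p-r in degrees, folded into [0, 90]."""
    v1 = (q[0] - p[0], q[1] - p[1])
    v2 = (r[0] - p[0], r[1] - p[1])
    cos = (v1[0] * v2[0] + v1[1] * v2[1]) / (math.hypot(*v1) * math.hypot(*v2))
    angle = math.degrees(math.acos(max(-1.0, min(1.0, cos))))
    return min(angle, 180.0 - angle)  # an edge may point towards or away from the VP


def check_edge(edge, vanishing_point, tol_deg=3.0):
    """Return (is_correct, edge_to_display) for one student edge."""
    start, end = edge
    if line_angle_deg(start, end, vanishing_point) <= tol_deg:
        return True, edge
    # keep the start point; project the end point onto the line towards the VP
    dx, dy = vanishing_point[0] - start[0], vanishing_point[1] - start[1]
    t = ((end[0] - start[0]) * dx + (end[1] - start[1]) * dy) / (dx * dx + dy * dy)
    return False, (start, (start[0] + t * dx, start[1] + t * dy))


print(check_edge(((0, 0), (5, 0)), (10, 0)))  # aligned with the vanishing point
print(check_edge(((0, 0), (5, 1)), (10, 0)))  # off by about 11 degrees, so corrected
```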

Sometimes it is hard to tell which type of perspective a student had in mind. Such a drawing can be interpreted, and therefore corrected, in more than one way, for example as either two-point or three-point perspective. To handle this case, we added an option that lets students indicate the type of perspective they drew before running the algorithm.
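Here is why one drawing can support two readings. If a student draws the vertical edges only slightly converging, their vanishing point lies very far away. The drawing then sits between two-point perspective, where the verticals are parallel, and three-point perspective, where they converge. The hypothetical sketch below turns that intuition into a rule with made-up thresholds. It is not the app's logic. It lists the perspective types that are consistent with how far each edge family's vanishing point lies, and it falls back on the student's stated choice only when more than one type fits.

```python
import math


def plausible_types(vp_distances, near=8.0, far=20.0):
    """Perspective types (1, 2 or 3 points) consistent with three edge families.

    vp_distances: distance of each family's vanishing point from the image centre,
    in image widths; math.inf means the edges are drawn exactly parallel.
    Closer than `near` counts as converging, `far` or beyond as parallel, and
    anything in between could be read either way (thresholds are assumptions)."""
    converging = sum(1 for d in vp_distances if d <= near)
    unclear = sum(1 for d in vp_distances if near < d < far)
    low = max(converging, 1)
    high = max(converging + unclear, 1)
    return set(range(low, high + 1))


def resolve_perspective(vp_distances, user_choice=None):
    options = plausible_types(vp_distances)
    if len(options) == 1:
        return next(iter(options))
    if user_choice in options:
        return user_choice  # the student's own statement settles the ambiguity
    raise ValueError(f"ambiguous drawing; ask the student to choose one of {sorted(options)}")


print(plausible_types((1.5, 2.0, math.inf)))   # verticals parallel: two-point only
print(plausible_types((1.5, 2.0, 12.0)))       # verticals barely converge: two or three
print(resolve_perspective((1.5, 2.0, 12.0), user_choice=3))
```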

We also built a web application that lets students check their work online. On a single page, users upload a photo of their parallelepiped drawing and indicate the type of perspective. The algorithm then processes the input, and the app shows the corrected picture.

Together with the client, we are launching a beta version to collect more data on how real students draw and to diversify our dataset accordingly. After students upload their work and see the corrected version, the app asks them to rate how relevant the correction is on a scale from 1 to 5.

The admin panel stores the original and corrected images, along with the processing time of each image and the ratings assigned by students. This data will help us decide whether the algorithm's performance needs improvement.
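The case study does not describe how the admin-panel data is analyzed. One hypothetical way to turn it into a "does the algorithm need improvement" decision is a small aggregation like the one below. The record fields, the 5-second time budget, and the "rating of 2 or lower" cut-off are illustrative assumptions, not the project's schema or thresholds.

```python
import math
import statistics
from dataclasses import dataclass
from typing import Optional


@dataclass
class CheckRecord:
    """One processed upload (hypothetical schema, not the actual admin panel)."""
    image_id: str
    processing_seconds: float
    rating: Optional[int] = None  # student's 1-5 relevance rating, if given


def summarize(records, slow_seconds=5.0, low_rating=2):
    """Aggregate speed and rating data to flag whether the algorithm needs work."""
    if not records:
        raise ValueError("no records to summarize")
    times = sorted(r.processing_seconds for r in records)
    p95 = times[math.ceil(0.95 * len(times)) - 1]  # nearest-rank 95th percentile
    ratings = [r.rating for r in records if r.rating is not None]
    return {
        "uploads": len(records),
        "p95_seconds": p95,
        "needs_speedup": p95 > slow_seconds,
        "mean_rating": statistics.mean(ratings) if ratings else None,
        "low_rating_share": sum(r <= low_rating for r in ratings) / len(ratings) if ratings else None,
    }


records = [CheckRecord("a", 1.2, 5), CheckRecord("b", 0.8, 4),
           CheckRecord("c", 6.5, 2), CheckRecord("d", 1.0)]
print(summarize(records))
```

A tail percentile is used rather than the mean processing time because a few very slow images can hurt the student experience even when the average looks fine.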

Our current goal is to collect feedback from the first users, analyze the new dataset, and use the results to decide how to improve the algorithm further.

The Outcome

Using machine learning and neural networks, we developed EdTech solutions that:

- rate portrait drawings on a scale from 1 to 5

- check whether perspective in street drawings is correct

- suggest corrections to parallelepiped drawings

For the algorithm that automatically corrects errors in parallelepiped drawings, we created a web application that helps students get their work checked in a couple of clicks.

The algorithms save teachers’ and students’ time by detecting and correcting the typical mistakes of novice artists. The tool will be integrated into an e-learning platform. Stan’s testimonial about working with Azoft is available on Clutch.

Next, we plan to improve the app’s speed and accuracy, with a focus on recognizing wobbly lines. To do this, we will train the algorithm on real students’ drawings.
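The case study says only that wobbly lines will be addressed by training on real drawings. For background, the sketch below shows a classical, non-neural way to straighten a wobbly stroke: a total-least-squares line fit. It assumes the stroke is already available as sampled points, and `fit_stroke` is a hypothetical helper, not part of the app. Unlike ordinary y-on-x regression, this fit treats x and y errors symmetrically, so it also handles vertical strokes.

```python
import math


def fit_stroke(points):
    """Fit a straight segment to the sampled points of a wobbly hand-drawn stroke.

    Uses total least squares: the line runs through the centroid along the
    principal axis of the points. Returns the two endpoints of the fitted segment."""
    n = len(points)
    mx = sum(x for x, _ in points) / n
    my = sum(y for _, y in points) / n
    sxx = sum((x - mx) ** 2 for x, _ in points)
    syy = sum((y - my) ** 2 for _, y in points)
    sxy = sum((x - mx) * (y - my) for x, y in points)
    theta = 0.5 * math.atan2(2 * sxy, sxx - syy)  # direction of the principal axis
    dx, dy = math.cos(theta), math.sin(theta)
    # project every point onto the axis; the extreme projections become the endpoints
    ts = [(x - mx) * dx + (y - my) * dy for x, y in points]
    return (mx + min(ts) * dx, my + min(ts) * dy), (mx + max(ts) * dx, my + max(ts) * dy)


wobbly = [(x, 2 * x + 1 + (0.2 if x % 2 else -0.2)) for x in range(11)]
print(fit_stroke(wobbly))
```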

Stack

point_alignments, kNearestNeighbors, Gaussian Mixtures, Python, Yii2, Bootstrap, MySQL, AWS

